- Unique Submission Team

SECURE DATA ACQUISITION (CIS1006) ICA – PART 1

The Python programming language is used here to analyze the data with several coding techniques and tools. As a high-level language, Python supports rapid development and keeps programs simple to maintain.

The analysis uses the information in the data set to examine how the connection records differ from one another. As technology and computing have grown, the number of networks and applications has increased as well.

The KDDCup99 data set derives from data gathered at MIT Lincoln Laboratory under the sponsorship of the Defense Advanced Research Projects Agency (DARPA). This data was used to evaluate intrusion detection systems in 1998 and 1999, and the two resulting data sets are known as DARPA98 and DARPA99.

These data sets include raw tcpdump data from the simulation of a medium-sized US Air Force base. The KDDCup99 data set itself was provided through the Knowledge Discovery and Data Mining Tools competition in 1999.

KDDCup99 is a transformed version of the DARPA tcpdump data. It is intended for applying and evaluating algorithms such as machine learning classifiers. Each record consists of 42 fields (the features together with the connection label), derived from networking and domain knowledge.

It is important to note that the test data does not follow the same probability distribution as the training data. The test data also includes attack types that do not appear in the training data, which makes the task more realistic. Some researchers and experts believe that most novel attacks are variants of known attacks.

The signature of the known attacks can therefore be sufficient to catch the novel variants (Guzdial and Ericson, 2016). The features are described under separate headings below. There are 24 attack types in the training data, with around 14 additional types in the test data. The higher-level features help to distinguish normal connections from attacks.

Basic features: this category summarizes the attributes that can be extracted from a TCP/IP connection (Diamond and Boyd, 2016). Relying on these features tends to delay detection.

Traffic features: in this category, the features are computed over a window interval and are divided into two groups. The "same host" features examine only the connections made in the past 2 seconds (Rahmati et al. 2019).

These same-host features consider the connections in the past 2 seconds that have the same destination host as the current connection, and they calculate statistics related to protocol and service behaviour. The "same service" features examine only the connections in the past 2 seconds that have the same service as the current connection.

The two types of traffic features described above are called time-based features (Srinath, 2017). However, there are several slow probing attacks that scan ports using a time interval much longer than two seconds.

As a result, these attacks do not produce intrusion patterns within a two-second time window.

To solve this issue, the same-host and same-service features are recalculated (Xu et al. 2016) over a window of the last 100 connections rather than a two-second time window. The resulting features are called connection-based traffic features.
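The difference between the two kinds of window can be illustrated with a small sketch. The `Conn` record, the two function names, and the sample scan are hypothetical and only approximate the idea; this is not the procedure that was used to build KDDCup99.

```python
from dataclasses import dataclass


@dataclass
class Conn:
    time: float     # seconds since the start of the capture
    dst_host: str   # destination host of the connection
    service: str    # destination service, e.g. "http"


def time_based_counts(history, current, window=2.0):
    """Count earlier connections from the last `window` seconds that share
    the current connection's destination host or service."""
    recent = [c for c in history if 0 <= current.time - c.time <= window]
    same_host = sum(c.dst_host == current.dst_host for c in recent)
    same_service = sum(c.service == current.service for c in recent)
    return same_host, same_service


def connection_based_counts(history, current, n=100):
    """Same counts, but over the last `n` connections regardless of time.
    Assumes `history` is in chronological order."""
    recent = history[-n:]
    same_host = sum(c.dst_host == current.dst_host for c in recent)
    same_service = sum(c.service == current.service for c in recent)
    return same_host, same_service


# A slow scan: one probe every 5 seconds against the same host,
# each aimed at a different (made-up) service name.
history = [Conn(5.0 * i, "10.0.0.5", f"svc{i}") for i in range(10)]
current = Conn(50.0, "10.0.0.5", "svc10")

print(time_based_counts(history, current))        # (0, 0)
print(connection_based_counts(history, current))  # (10, 0)
```

In this made-up scan, the two-second window sees nothing because the probes are five seconds apart, while the 100-connection window still counts all ten earlier probes against the same host.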

Content features: in contrast to probing and DoS attacks, U2R and R2L attacks do not produce frequent sequential intrusion patterns.

In Python, data cleaning is used to organize the data gathered from the KDDCup99 data set. In the first step, the data is extracted from kddcup.data_10_percent.gz, which is available on the KDDCup99 data set website (Sun et al. 2018).

The file is then opened in Jupyter. Code is used to arrange the data under 42 column headings.

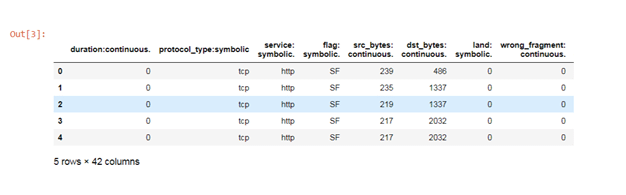

The file is then read using the code “df = pd.read_csv”. Once the data is loaded, the preview shows 42 columns and the first five rows. The columns of the data are duration, protocol type, service, and so on.

In the call, “,” is given as the separator that splits each record into 42 columns. The gzip setting states that the uploaded file is a gzip-compressed file rather than a plain CSV file. An argument set to False is used to ignore errors in the data set. In the last step, “names=” is used to assign a name to each column. (pdfs.semanticscholar.org, 2020)
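A minimal sketch of such a loading step is given here. The column names are the ones commonly listed for KDDCup99 (41 features plus the label), and `load_kdd` is a hypothetical helper. The report does not show which argument was set to False, so `on_bad_lines="skip"` is an assumption: it is one way to skip malformed lines in recent pandas versions. A tiny made-up gzip file stands in for kddcup.data_10_percent.gz so that the example runs on its own.

```python
import gzip
import os
import tempfile

import pandas as pd

# Column names as commonly listed for KDDCup99: 41 features plus the label.
KDD_COLUMNS = [
    "duration", "protocol_type", "service", "flag", "src_bytes", "dst_bytes",
    "land", "wrong_fragment", "urgent", "hot", "num_failed_logins",
    "logged_in", "num_compromised", "root_shell", "su_attempted", "num_root",
    "num_file_creations", "num_shells", "num_access_files",
    "num_outbound_cmds", "is_host_login", "is_guest_login", "count",
    "srv_count", "serror_rate", "srv_serror_rate", "rerror_rate",
    "srv_rerror_rate", "same_srv_rate", "diff_srv_rate", "srv_diff_host_rate",
    "dst_host_count", "dst_host_srv_count", "dst_host_same_srv_rate",
    "dst_host_diff_srv_rate", "dst_host_same_src_port_rate",
    "dst_host_srv_diff_host_rate", "dst_host_serror_rate",
    "dst_host_srv_serror_rate", "dst_host_rerror_rate",
    "dst_host_srv_rerror_rate", "label",
]


def load_kdd(path):
    """Read a gzip-compressed, comma-separated KDDCup99 file."""
    return pd.read_csv(
        path,
        sep=",",              # fields are separated by commas
        compression="gzip",   # the file is gzip-compressed, not plain CSV
        header=None,          # the file has no header row
        names=KDD_COLUMNS,    # assign the 42 column names
        on_bad_lines="skip",  # assumption: skip malformed lines
    )


# Made-up stand-in for the real file: two identical 42-field records.
sample_row = ["0", "tcp", "http", "SF", "181", "5450"] + ["0"] * 35 + ["normal."]
path = os.path.join(tempfile.mkdtemp(), "sample.gz")
with gzip.open(path, "wt") as fh:
    fh.write(",".join(sample_row) + "\n")
    fh.write(",".join(sample_row) + "\n")

df = load_kdd(path)
print(df.shape)
print(df.head())
```

With the real file, `load_kdd("kddcup.data_10_percent.gz")` would be called instead, assuming the file sits in the working directory.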

Figure 2: exploratory set

(Source: Python)

After the data set is loaded, “df.head()” is used to display the first rows of the resulting table. This confirms that the values are arranged under the correct column names.

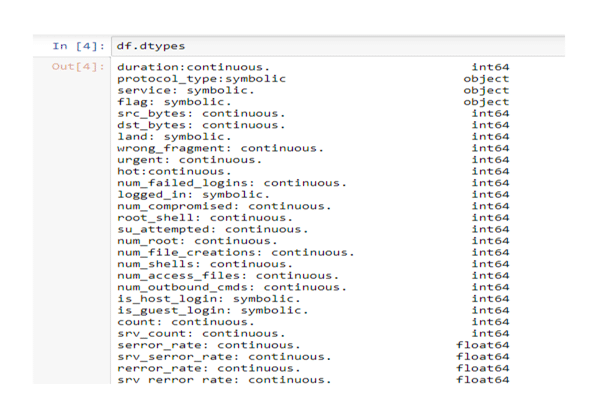

Figure 3: Types of data

(Source: Python)

Then “df.dtypes” is used to show the data type of each column in the data set. The columns fall into different types, such as int64, float64, object, and so on. The duration column is int64, which means that it holds integers.

Columns such as src_bytes, dst_bytes, wrong_fragment, and so on are also int64, which means that they hold integer values. The serror_rate and srv_serror_rate columns are float64, which means that they hold decimal values (python.org, 2020).
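As a small illustration of the type check, the sketch here builds a made-up four-column frame (the values are invented, the column names follow KDDCup99) and separates numeric from non-numeric columns.

```python
import pandas as pd

# Made-up sample with a mix of integer, text, and decimal columns.
df = pd.DataFrame(
    {
        "duration": [0, 0, 2],
        "protocol_type": ["tcp", "udp", "icmp"],
        "src_bytes": [181, 239, 1032],
        "serror_rate": [0.0, 0.5, 1.0],
    }
)

# One dtype per column.
print(df.dtypes)

# Split the columns by kind; useful before plotting or modelling.
numeric_cols = df.select_dtypes(include="number").columns.tolist()
text_cols = df.select_dtypes(exclude="number").columns.tolist()
print(numeric_cols)
print(text_cols)
```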

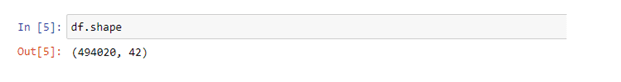

Figure 4: Shape of data

(Source: Python)

“df.shape” is used to return the number of rows and columns in the data set. This makes it easy to check the exact size of the data in Python.

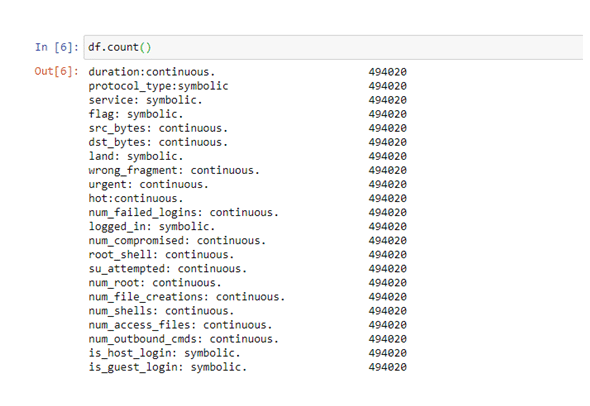

Figure 5: Count of data

(Source: Python)

“df.count()” is used to return the number of non-missing values in each column. This shows how many values are actually present under each heading of the KDDCup99 data set.
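The two checks differ in a way that a short sketch makes visible: `shape` is an attribute holding the table size, while `count()` is a method that skips missing values. The frame here is made up and contains one missing entry on purpose.

```python
import pandas as pd

# Made-up sample with one missing value in the "service" column.
df = pd.DataFrame(
    {
        "duration": [0, 0, 2],
        "service": ["http", None, "smtp"],
    }
)

n_rows, n_cols = df.shape   # size of the table
non_missing = df.count()    # non-missing values per column

print(n_rows, n_cols)
print(non_missing)
```

If `count()` returns the same number as the row count for every column, the data set has no missing values.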

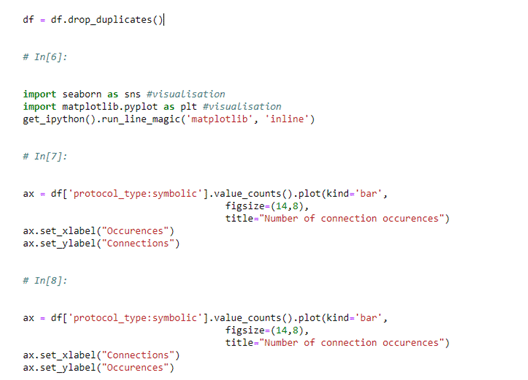

“df = df.drop_duplicates()” is used to delete duplicate records from the data set. This is useful because each distinct connection record then appears only once.

Removing the duplicates prevents repeated records from distorting the counts, which improves the accuracy of the analysis.
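A minimal sketch of the de-duplication step on made-up rows follows. Resetting the index afterwards is an optional extra that is not mentioned in the report.

```python
import pandas as pd

# Made-up sample in which the first two rows are identical.
df = pd.DataFrame(
    {
        "protocol_type": ["tcp", "tcp", "udp", "tcp"],
        "service": ["http", "http", "domain_u", "smtp"],
        "label": ["normal.", "normal.", "normal.", "neptune."],
    }
)

rows_before = len(df)
# Keep the first copy of each fully identical row.
df = df.drop_duplicates().reset_index(drop=True)
rows_removed = rows_before - len(df)

print(rows_removed)
```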

Figure 6: Visualization code

(Source: Python)

Seaborn and matplotlib are used to generate graphs and charts from the data set in Python. In this step, the visualization code produces several graphs showing the number of occurrences in the records [Refer to Appendix 1].
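The report's own plotting code is not reproduced in the text, so the following is only a sketch of how a count chart could be drawn. It uses matplotlib alone on made-up data; seaborn's `countplot` would give a similar chart.

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; not needed inside Jupyter
import matplotlib.pyplot as plt
import pandas as pd

# Made-up sample of protocol types.
df = pd.DataFrame({"protocol_type": ["tcp", "tcp", "tcp", "udp", "icmp", "tcp"]})

# Number of records per protocol type, most frequent first.
counts = df["protocol_type"].value_counts()

fig, ax = plt.subplots(figsize=(6, 4))
ax.bar(counts.index.tolist(), counts.to_numpy())
ax.set_xlabel("protocol_type")
ax.set_ylabel("number of connections")
ax.set_title("Connections per protocol type")
fig.tight_layout()
```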

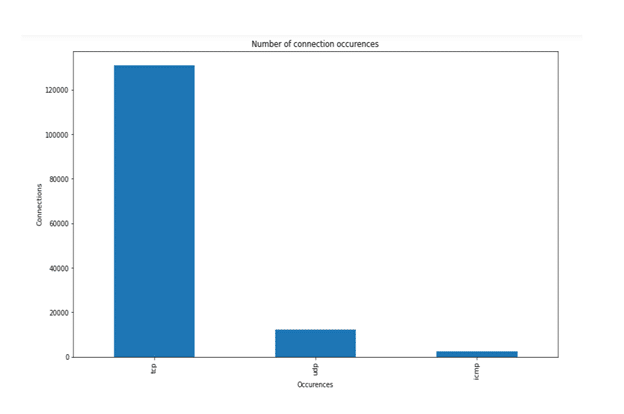

Figure 7: Number of connection occurrences

(Source: Python)

The chart above shows the number of connections for each protocol type. TCP accounts for more than 120,000 connections in the data set.

UDP accounts for fewer than 20,000 connections, and ICMP for far fewer still.

It can therefore be observed that most of the connections in the data set use TCP rather than UDP or ICMP.

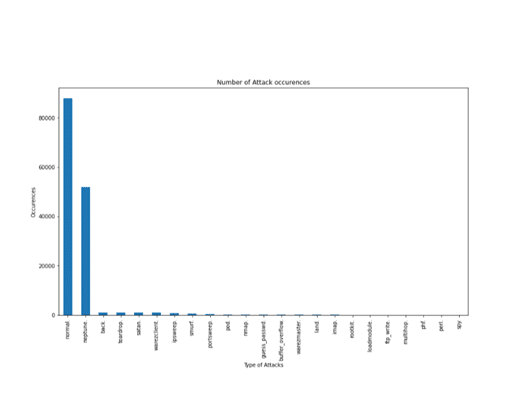

Figure 8: Number of attack occurrences

(Source: Python)

The graph above, generated with Python code, shows the number of occurrences of each connection label. The normal label, which marks traffic that is not an attack, occurs most often, with more than 80,000 records. The neptune attack is the most frequent after normal, with between 40,000 and 60,000 occurrences.
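The numbers behind a chart of this kind can be obtained with `value_counts`. The sketch uses made-up labels. Labels in the raw KDDCup99 file carry a trailing period (for example "normal."), so it is stripped first; whether the report did the same is not stated.

```python
import pandas as pd

# Made-up sample of connection labels in the raw "name." form.
df = pd.DataFrame(
    {"label": ["normal.", "neptune.", "normal.", "smurf.", "neptune.", "normal."]}
)

# Strip the trailing period and count each label.
labels = df["label"].str.rstrip(".")
label_counts = labels.value_counts()

# "normal" is not an attack, so drop it before ranking the attacks.
attack_counts = label_counts.drop("normal")

print(label_counts)
print(attack_counts.idxmax())
```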

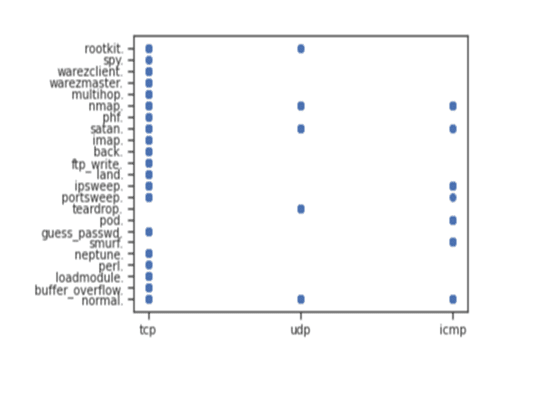

Figure 9: Number of attack occurrences type

(Source: Python)

The graph above shows the relationship between protocol type and attack type. TCP connections are present in most types of attack, which indicates that the attacks are most strongly associated with TCP.

The graph also shows that UDP is associated with only a few labels, such as rootkit and normal.
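A cross-tabulation gives the same relationship in table form. The rows in this sketch are invented for illustration and do not reflect the real counts.

```python
import pandas as pd

# Made-up sample pairing each connection's protocol with its label.
df = pd.DataFrame(
    {
        "protocol_type": ["tcp", "tcp", "udp", "icmp", "tcp", "udp"],
        "label": ["normal", "neptune", "normal", "smurf", "neptune", "rootkit"],
    }
)

# Rows are labels, columns are protocol types, cells are record counts.
table = pd.crosstab(df["label"], df["protocol_type"])
print(table)

# Number of distinct protocols on which each label occurs.
protocols_per_label = (table > 0).sum(axis=1)
print(protocols_per_label)
```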

In this report, Python code is used to analyze relationships within the KDDCup99 data set, drawing on its 42 columns.

The report focuses on the relationship between the protocol types, their number of occurrences, and the types of attack. It also presents the cleaning and exploratory methods used to analyze the data set.

The cleaning steps remove unwanted data and arrange the records into named columns. This demonstrates the effective use of Python for analyzing the types of attack and their occurrences.

The relationships are evaluated with Python code that generates charts and graphs.

Book

Guzdial, M. and Ericson, B., 2016. Introduction to computing and programming in python. UK: Pearson.

Journals

Diamond, S. and Boyd, S., 2016. CVXPY: A Python-embedded modeling language for convex optimization. The Journal of Machine Learning Research, 17(1), pp.2909-2913.

Rahmati, O., Moghaddam, D.D., Moosavi, V., Kalantari, Z., Samadi, M., Lee, S. and Tien Bui, D., 2019. An automated python language-based tool for creating absence samples in groundwater potential mapping. Remote Sensing, 11(11), p.1375.

Srinath, K.R., 2017. Python–The Fastest Growing Programming Language. International Research Journal of Engineering and Technology (IRJET), 4(12), pp.354-357.

Sun, Q., Berkelbach, T.C., Blunt, N.S., Booth, G.H., Guo, S., Li, Z., Liu, J., McClain, J.D., Sayfutyarova, E.R., Sharma, S. and Wouters, S., 2018. PySCF: the Python‐based simulations of chemistry framework. Wiley Interdisciplinary Reviews: Computational Molecular Science, 8(1), p.e1340.

Xu, Z., Zhang, X., Chen, L., Pei, K. and Xu, B., 2016, November. Python probabilistic type inference with natural language support. In Proceedings of the 2016 24th ACM SIGSOFT International Symposium on Foundations of Software Engineering (pp. 607-618).

Online articles

pdfs.semanticscholar.org, (2020), python language, available at: https://pdfs.semanticscholar.org/8d5d/3f2b6d01df02faffc44aee0fb2c44d7f62b3.pdf [accessed on 15.02.2020]

Websites

python.org, (2020), python language, available at: https://www.python.org/ [accessed on 15.02.2020]

Appendix 1: visualization codes

(Source: Python)